How to Fine-Tune BERT for Text Classification: Paper Notes

Nov 13, 2019

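BERT performs very well on NLP tasks. This paper runs an extensive set of experiments on applying BERT to text classification and shares practical experience on hyperparameter tuning and improvements that draw more out of BERT's potential.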


Attention Is All You Need: Paper Notes

Nov 13, 2019

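This post mainly covers the self-attention mechanism and the Transformer model. It is a summary based on my own reading of the paper and of other people's articles, not a complete translation of the paper.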


QANet: Paper Notes

Nov 13, 2019

QANet: Combining Local Convolution With Global Self-Attention For Reading Comprehension


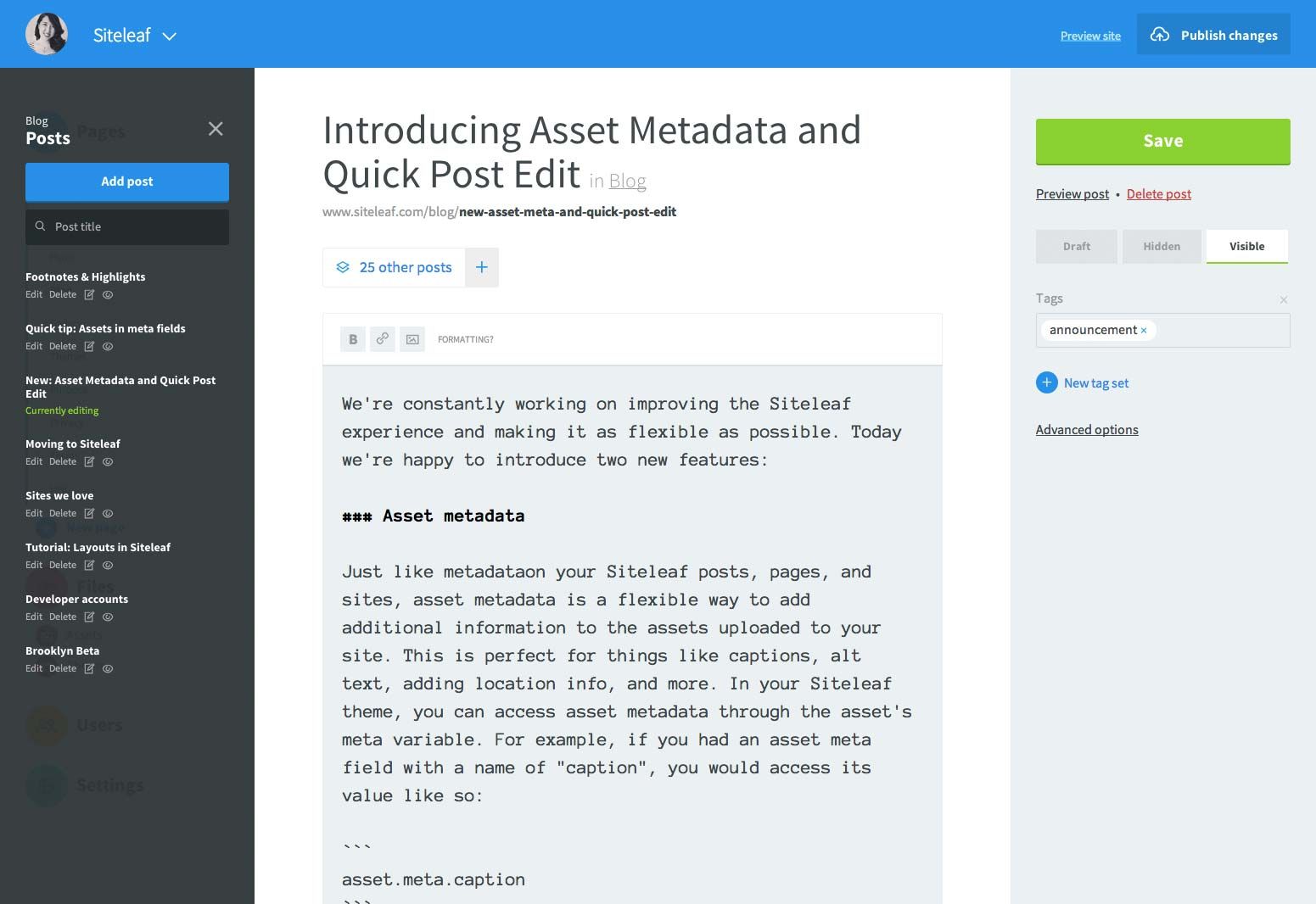

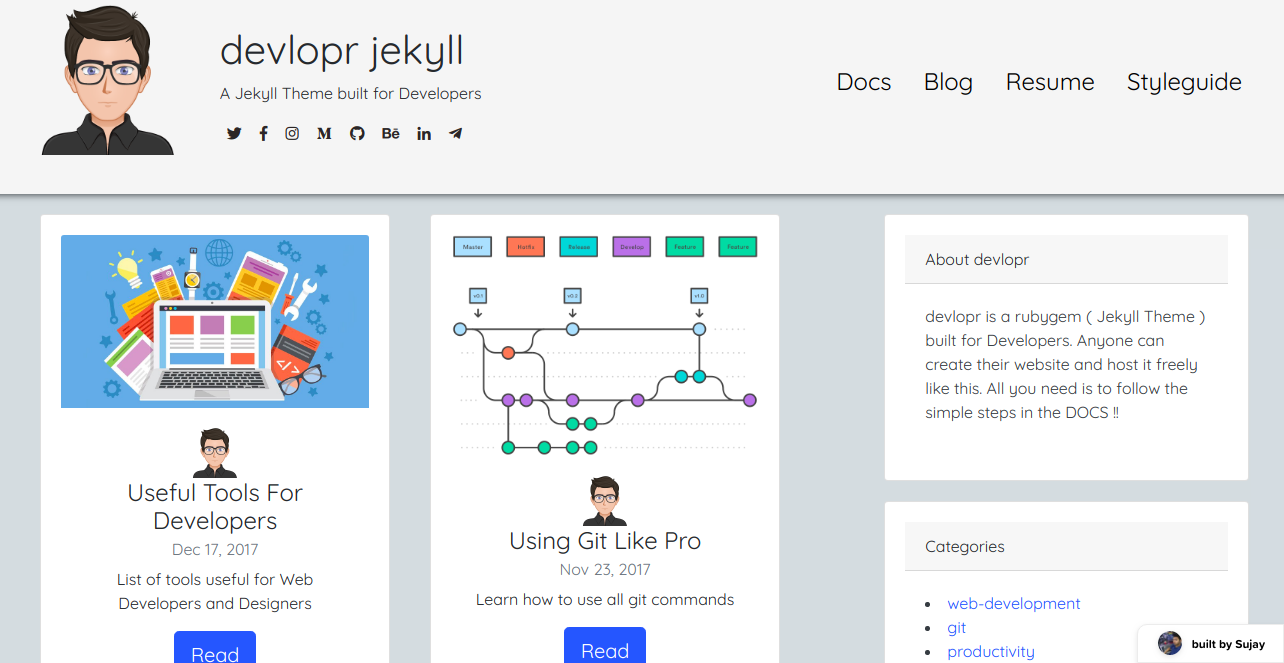

Using Siteleaf CMS with devlopr-jekyll Blog

May 22, 2019

Use Siteleaf CMS for your devlopr-jekyll blog


How to deploy devlopr-jekyll Blog using Github Pages and Travis

May 21, 2019

Deployment guide for a devlopr-jekyll blog using GitHub Pages and Travis CI


Build and Deploy a blog using devlopr-jekyll and Github Pages

May 20, 2019

Getting started: how to build a blog using devlopr-jekyll and GitHub Pages

About

Hi, my name is Hao Sun. I love machine learning and NLP.

Follow @sigmeta